The Ultimate Guide to Web Scraping: What is Web Scraping?

Sergiu Inizian on Mar 19 2021

Knowledge is power, a wise man once said. But in today’s fast-paced world, information and data are the true power. If you are starting a business or want to scale one, having the numbers on your side will always be an ace in the hole.

With zillions of websites to access in order to gather information, doing this the hard way is going to take a while.

Copying and pasting from every relevant website to collect the data you need for an informed decision wastes both time and resources.

By the time you are done, you will most likely have missed the opportunity.

But how can you get your data easily and quickly? Let’s find out:

What is web scraping?

Web Scraping (also known as Web Data Extraction or Web Harvesting) is the automated process of collecting structured web data using bots. But let’s start easy.

Web scraping works by extracting the HTML code of a public web page and, with it, the data displayed on that page, much of which is typically stored in the site’s database. The scraper can then replicate the website’s content elsewhere, in different types of files, giving you immediate access to the information right on your computer.

Magic, right? Suddenly, competitors’ prices, lead generation, or market research are just two clicks away, improving the speed and precision of the decision-making process.

The Internet doesn’t feel infinite anymore.

How does web scraping really work?

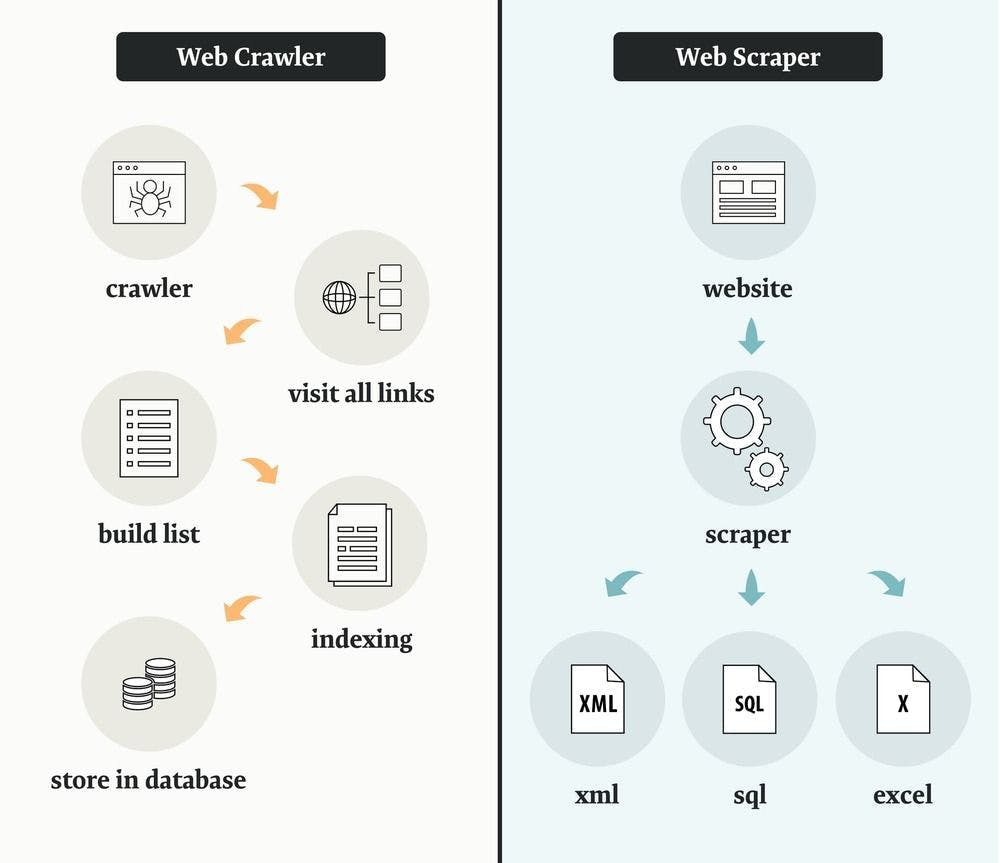

The recipe for a successful web scraping process includes two main ingredients: a crawler and a scraper. The crawler is the mother who takes her child to every candy shop that has specific chocolate types, and the scraper is the kid who takes the chocolates from the shelves and puts them in the basket. In other words, the crawler guides the scraper across the Internet, and the scraper extracts the needed data.

But let’s make it clearer.

The crawler

The web crawler, aka the spider, is an automated program (a bot) that systematically browses the Internet to build an index of data. It discovers content by following links, just like someone with a lot of free time who keeps browsing from link to link. In the web scraping process, you usually “crawl” for websites and URLs that match your criteria and then pass them on to your scraper.
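The crawling step can be sketched as a breadth-first walk over links that stays on one domain and collects the URLs worth scraping. This is a minimal sketch, not a production crawler: `fetch` is a hypothetical function you supply (for example, a wrapper around `urllib.request.urlopen`), `matches` is your own URL criterion, and the demo uses a made-up in-memory "website" instead of real HTTP requests. It ignores robots.txt, rate limiting, and JavaScript-generated links.

```python
from collections import deque
from html.parser import HTMLParser
from typing import Callable, Optional
from urllib.parse import urldefrag, urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[str] = []

    def handle_starttag(self, tag: str, attrs: list) -> None:
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(
    start_url: str,
    fetch: Callable[[str], Optional[str]],
    matches: Callable[[str], bool],
    max_pages: int = 50,
) -> list[str]:
    """Breadth-first crawl that stays on the start URL's domain.

    Returns the visited URLs accepted by `matches`: the list you
    would hand over to the scraper.
    """
    domain = urlparse(start_url).netloc
    queue = deque([start_url])
    seen = {start_url}
    selected: list[str] = []
    visited = 0
    while queue and visited < max_pages:
        url = queue.popleft()
        visited += 1
        html = fetch(url)
        if html is None:  # page could not be fetched; skip it
            continue
        if matches(url):
            selected.append(url)
        collector = LinkCollector()
        collector.feed(html)
        for href in collector.links:
            # Resolve relative links and drop #fragments before queueing.
            absolute = urldefrag(urljoin(url, href)).url
            if urlparse(absolute).netloc == domain and absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return selected


# Demo with a hypothetical in-memory "website" instead of real HTTP requests.
site = {
    "https://shop.example/": '<a href="/products/1">A</a> <a href="/about">About</a>',
    "https://shop.example/products/1": '<a href="/products/2">B</a>',
    "https://shop.example/products/2": '<a href="https://other.example/x">Out</a>',
    "https://shop.example/about": "",
}
print(crawl("https://shop.example/", site.get, lambda u: "/products/" in u))
# → ['https://shop.example/products/1', 'https://shop.example/products/2']
```

The queue keeps the crawl breadth-first, and the `seen` set stops the crawler from visiting the same page twice.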

The scraper

The web scraper is a specialized software tool programmed to parse public web pages and quickly extract accurate information from them.

You will find web scrapers with different designs on the market, depending on the complexity of your needs. But the most important feature of a web scraper, and one you definitely need to keep in mind, is its data locators, or selectors.

These data locators (selectors) find the data you need in the HTML file and extract it. Web scrapers usually export the extracted data as JSON, CSV, XML, or a simple spreadsheet.
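To make selectors concrete, here is a minimal sketch that applies XPath-style selectors to a made-up product page and exports the result as JSON and CSV. It assumes well-formed markup, because Python's standard-library `xml.etree.ElementTree` only parses valid XML and supports a limited XPath subset; real-world HTML is often messier, which is why lenient parsers such as lxml or BeautifulSoup are the usual choice. The page, class names, and field names are hypothetical.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET

# Hypothetical sample page. ElementTree only parses well-formed markup.
PAGE = """
<html><body>
  <div class="product"><h2>Blue Mug</h2><span class="price">9.99</span></div>
  <div class="product"><h2>Red Mug</h2><span class="price">11.50</span></div>
</body></html>
"""


def extract_products(html: str) -> list[dict]:
    """Apply XPath-style selectors to pull a name and price from each product."""
    root = ET.fromstring(html.strip())
    products = []
    for node in root.iterfind(".//div[@class='product']"):
        products.append({
            "name": node.findtext("h2"),
            "price": float(node.findtext("span[@class='price']")),
        })
    return products


def to_json(rows: list[dict]) -> str:
    """Serialize the extracted rows as a JSON array."""
    return json.dumps(rows, indent=2)


def to_csv(rows: list[dict]) -> str:
    """Serialize the extracted rows as CSV with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = extract_products(PAGE)
print(to_json(rows))
print(to_csv(rows))
```

If the site's layout changes, only the selector strings need updating, which is why they are worth keeping in one place.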

After you’ve downloaded all the information you need, the web scraper’s job is done. It’s that easy.

What is the web scraping process?

There are different ways to gain access to web scraped data, depending on your needs, the size of the project, or the amount of data needed.

You can do it yourself (if you have the time and energy to spend)

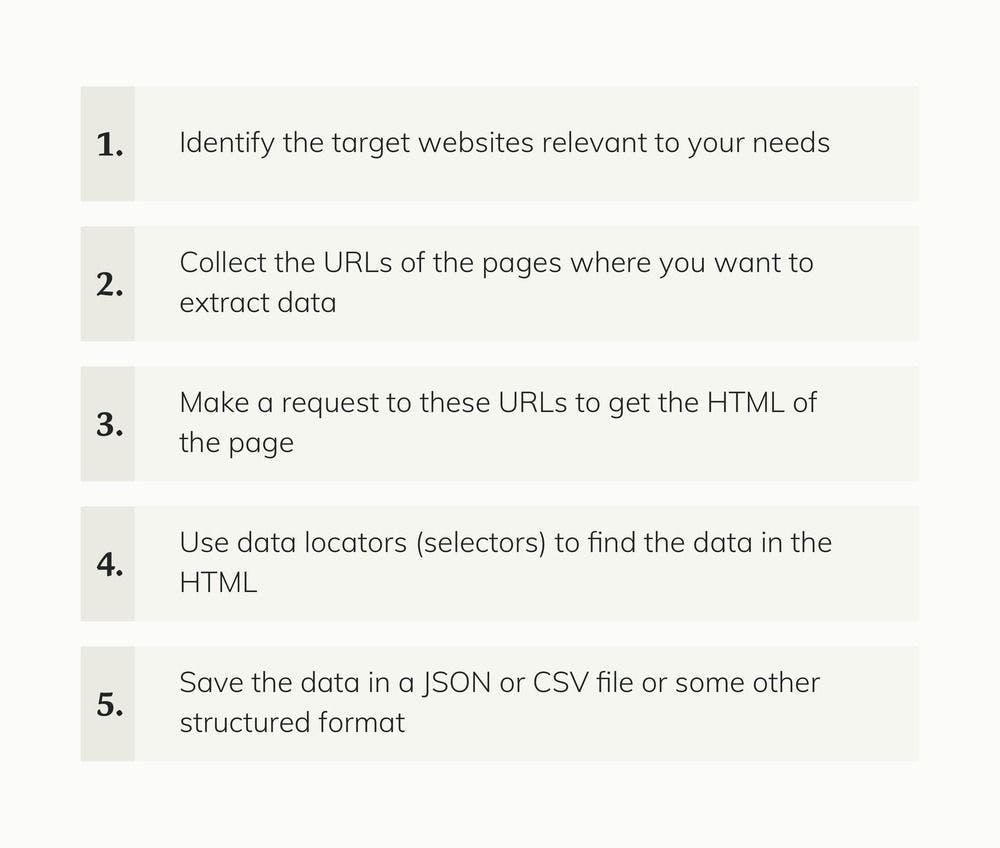

There are five general steps that get you closer to your web data:

We recommend the do-it-yourself path for small-scale projects that need little data.

If you want to scale, or your project requires lots of web data, you will face technical challenges that can demand a lot of time and resources. Some of them are maintaining the scraper when the website layout changes, managing proxies, executing JavaScript, and working around anti-bot measures. The more complex the scraper, the more programming knowledge it requires.
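Managing proxies often means rotating them, so that consecutive requests leave from different IP addresses. The sketch below is a minimal round-robin rotator built on the standard library's `urllib.request.ProxyHandler`; the proxy addresses are placeholders you would replace with ones from your provider. It does not solve CAPTCHAs, render JavaScript, or handle other anti-bot measures.

```python
import itertools
import urllib.request

# Hypothetical proxy endpoints; replace them with your provider's addresses.
PROXIES = [
    "http://proxy1.example:8080",
    "http://proxy2.example:8080",
    "http://proxy3.example:8080",
]


class ProxyRotator:
    """Hands out proxies in round-robin order."""

    def __init__(self, proxies: list[str]) -> None:
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._cycle = itertools.cycle(proxies)

    def next_proxy(self) -> str:
        """Return the next proxy URL in the rotation."""
        return next(self._cycle)

    def next_opener(self) -> urllib.request.OpenerDirector:
        """Build an opener that routes HTTP and HTTPS through the next proxy."""
        proxy = self.next_proxy()
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        return urllib.request.build_opener(handler)


rotator = ProxyRotator(PROXIES)
# Each call uses the next proxy in the list (needs real proxies to run):
# with rotator.next_opener().open("https://example.com/", timeout=10) as response:
#     html = response.read().decode("utf-8")
```

Round-robin is the simplest policy; a real pool would also drop proxies that fail or get blocked.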

That’s why most businesses choose to outsource their web scraping projects to specialized providers whose pre-built software you can download and use immediately.

But things are getting easier.

You can outsource it

Let’s take WebScrapingAPI as an example. This product works as a service that you don’t have to download, install, or set up, and it comes with lots of benefits.

- It's easy - all you have to do is create an account on webscrapingapi.com and send your first request.

- It's reliable - you won’t have to deal with CAPTCHAs, proxies, JavaScript rendering, or IP rotation because WebScrapingAPI handles all these blockers in the backend.

- It's customizable - you can choose many of the details of your requests (headers, IP geolocation, sticky sessions, and much more).

Bonus point: you get 1000 free API calls, and all the requested web data comes in JSON format.
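Sending a request to a scraping API like this usually comes down to a single HTTP GET with your API key and the target URL as query parameters. The sketch below only builds that URL. The endpoint and the parameter names (`api_key`, `url`, and the optional `country` and `session` used here for IP geolocation and sticky sessions) are assumptions for illustration; check the official WebScrapingAPI documentation for the exact names before relying on them.

```python
from urllib.parse import urlencode

# Assumption: endpoint and parameter names are illustrative; verify them
# against the official WebScrapingAPI documentation.
API_ENDPOINT = "https://api.webscrapingapi.com/v1"


def build_request_url(api_key: str, target_url: str, **options: str) -> str:
    """Build the GET URL for one scraping request.

    `options` holds optional settings, e.g. a proxy country for IP
    geolocation or a session ID for sticky sessions (assumed names).
    """
    params = {"api_key": api_key, "url": target_url, **options}
    return f"{API_ENDPOINT}?{urlencode(params)}"


request_url = build_request_url(
    "YOUR_API_KEY", "https://example.com/products", country="us", session="42"
)
print(request_url)
```

`urlencode` percent-encodes the target URL so that it survives as a single query parameter. You would then send `request_url` with any HTTP client, such as `urllib.request.urlopen`.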

All these features help you save a lot of time while doing web scraping by giving you access to data within seconds. Plus, it solves problems other products can’t, by using the latest technologies available, powered by Amazon Web Services and with millions of API requests served every month.

In which cases can web scraping help you?

Price Intelligence - information about price and products

One of the main reasons entrepreneurs or businesses decide to use web scraping technology is to gather information about competitors’ prices and products, such as available stock or product descriptions. This common practice supports business growth and continuity by automating your pricing strategies and market positioning.

Frequent uses of web scraping tools in price intelligence include:

- dynamic pricing

- revenue optimization

- competitor monitoring

- product trend monitoring

- brand and MAP (minimum advertised price) compliance
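Once competitors’ listings have been scraped, checking MAP compliance reduces to a simple comparison. This is a minimal sketch that assumes the scraped data has already been normalized into a hypothetical `Listing` record (seller, SKU, price); the sellers, SKUs, and prices are made-up.

```python
from dataclasses import dataclass


@dataclass
class Listing:
    """One scraped offer (hypothetical fields)."""
    seller: str
    sku: str
    price: float


def map_violations(listings: list[Listing], map_prices: dict[str, float]) -> list[Listing]:
    """Return listings advertised below the minimum advertised price (MAP).

    SKUs without a MAP entry are ignored.
    """
    return [
        item for item in listings
        if item.sku in map_prices and item.price < map_prices[item.sku]
    ]


scraped = [
    Listing("shop-a", "MUG-01", 8.49),
    Listing("shop-b", "MUG-01", 9.99),
    Listing("shop-c", "TEA-02", 4.00),
]
for item in map_violations(scraped, {"MUG-01": 9.99, "TEA-02": 3.50}):
    print(f"{item.seller} lists {item.sku} at {item.price:.2f}")
# → shop-a lists MUG-01 at 8.49
```

The same comparison, run on a schedule against fresh data, is the core of competitor and MAP monitoring.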

Financial data

The process of making informed investment decisions can be very time-consuming. Use web scraping as a strategic tool to ease the process: compile information from different online sources, assess risks and opportunities, and base your decisions on authentic data.

By using web scraping for financial data you can:

- extract insights from SEC filings

- estimate company fundamentals

- have an overview of public sentiment

- monitor the news
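For news monitoring, a first pass can be as simple as filtering scraped headlines by company name and giving each one a rough tone score. The sketch below uses a naive keyword count with tiny, invented word lists; it is a crude stand-in for illustration only, not a real sentiment analysis method. Company names and headlines are fictional.

```python
import re

# Invented word lists for illustration; a real project would use a
# proper sentiment lexicon or model.
POSITIVE = {"beats", "growth", "record", "upgrade"}
NEGATIVE = {"lawsuit", "loss", "recall", "downgrade"}


def headline_score(headline: str) -> int:
    """Count positive words minus negative words in a headline."""
    words = re.findall(r"[a-z]+", headline.lower())
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def monitor(headlines: list[str], company: str) -> list[tuple[str, int]]:
    """Return (headline, score) pairs for headlines that mention `company`."""
    return [(h, headline_score(h)) for h in headlines if company.lower() in h.lower()]


headlines = [
    "Acme beats estimates on record cloud growth",
    "Acme faces lawsuit over product recall",
    "Globex announces new CEO",
]
for text, score in monitor(headlines, "Acme"):
    print(score, text)
```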

Market research

When starting a business or scaling one, market research is a vital source of information, particularly in complex industries. The more, the better. Through web scraping you can access high-quality, high-volume, and highly insightful web data that can be an important turning point:

- market trend analysis

- market pricing

- optimizing point of entry

- research & development

- competitor monitoring
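For market pricing, scraped prices are usually grouped by category and summarized. This minimal sketch assumes the scraper returns `(category, price)` pairs; the categories and prices are made-up.

```python
from collections import defaultdict
from statistics import median


def price_summary(products: list[tuple[str, float]]) -> dict[str, dict[str, float]]:
    """Group scraped (category, price) pairs; report min, median and max per category."""
    by_category: dict[str, list[float]] = defaultdict(list)
    for category, price in products:
        by_category[category].append(price)
    return {
        category: {"min": min(prices), "median": median(prices), "max": max(prices)}
        for category, prices in sorted(by_category.items())
    }


scraped = [
    ("kettles", 24.0), ("kettles", 31.5), ("kettles", 19.9),
    ("toasters", 35.0), ("toasters", 42.0),
]
print(price_summary(scraped))
```

The median is used instead of the mean so that a single outlier listing does not distort the picture of the market price.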

Real estate

This industry has undergone a digital transformation that disrupted traditional companies. As in every other industry, available data helps agents and brokerages make informed decisions within the market.

Web scraping helps businesses:

- appraise property value

- monitor vacancy rates

- estimate rental yields

- understand market direction
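Two of these metrics are simple arithmetic once the listings are scraped. The sketch uses the common gross definitions: gross rental yield is annual rent divided by the property price, and vacancy rate is vacant units divided by total units, both as percentages. It ignores costs such as taxes and maintenance, which net yield calculations would subtract; the sample figures are made-up.

```python
def gross_rental_yield(monthly_rent: float, price: float) -> float:
    """Annual rent as a percentage of the property price (before costs)."""
    return monthly_rent * 12 / price * 100


def vacancy_rate(vacant_units: int, total_units: int) -> float:
    """Share of listed units that are vacant, as a percentage."""
    return vacant_units / total_units * 100


print(round(gross_rental_yield(1_000, 200_000), 2))  # → 6.0
print(round(vacancy_rate(12, 150), 2))  # → 8.0
```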

Lead generation

Finding clients is a challenge in this unstable economy, and every advantage matters. Web scraping gives businesses access to structured, accurate lead lists, filtered by industry, location, or any other criteria they need.
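Filtering a scraped lead list is mostly a matter of matching fields and removing duplicates. This minimal sketch assumes leads have been parsed into a hypothetical `Lead` record and treats two leads with the same email address (ignoring case) as duplicates; all sample data is made-up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lead:
    """One scraped lead (hypothetical fields)."""
    company: str
    industry: str
    city: str
    email: str


def filter_leads(leads: list[Lead], industry: str, city: str) -> list[Lead]:
    """Keep leads matching the industry and city, dropping duplicate emails."""
    seen: set[str] = set()
    result = []
    for lead in leads:
        key = lead.email.strip().lower()
        if lead.industry == industry and lead.city == city and key not in seen:
            seen.add(key)
            result.append(lead)
    return result


leads = [
    Lead("Acme Bakery", "food", "Berlin", "info@acme.example"),
    Lead("Acme Bakery", "food", "Berlin", "INFO@acme.example"),
    Lead("Globex", "software", "Berlin", "hi@globex.example"),
    Lead("Initech", "food", "Munich", "sales@initech.example"),
]
print(filter_leads(leads, "food", "Berlin"))
```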

Customer reviews

People’s opinions and feelings about a business can have a great impact on any decision-making process. Web scraping makes it easier to collect reviews from all over the Internet so you can understand customers’ needs and expectations.
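Once reviews are scraped, a quick summary of the star ratings is often the first step. This minimal sketch assumes each review has already been reduced to an integer rating from 1 to 5; the ratings are made-up.

```python
from collections import Counter
from statistics import mean


def summarize_reviews(ratings: list[int]) -> dict:
    """Summarize scraped star ratings (1-5): count, average and distribution."""
    if not ratings:
        raise ValueError("need at least one rating")
    counts = Counter(ratings)
    return {
        "count": len(ratings),
        "average": round(mean(ratings), 2),
        # Report every star level, including those with no reviews.
        "distribution": {star: counts[star] for star in range(5, 0, -1)},
    }


print(summarize_reviews([5, 4, 4, 2, 5, 1, 5]))
```

The distribution often tells you more than the average: a product with many 5s and many 1s is divisive, not mediocre.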

Learn more

WebScrapingAPI addresses issues that have never been addressed before and solves them intelligently. We put the customer at the center so that the web scraping process is easier and faster and, in the end, delivers a higher-quality product.

That’s why your first 1000 API calls are free. See for yourself that having the Internet at your fingertips has never been easier!

If you want to learn more about web scraping and WebScrapingAPI, here are some resources you can access for free:
